Beyond the Image: Emerging Technologies & Chandra

Kimberly Arcand, Megan Watzke

AI-Driven Alt Text

In addition to its role in high-energy astrophysics research, the Chandra X-ray Center has invested in developing new methods for communicating complex scientific results to broad audiences. One of our recent communications projects explores how machine learning (ML) can make Chandra's extraordinary imagery more accessible. The project, described in a paper entitled “Accessing the Universe via Algorithm: Automating Alt-Text Generation with NASA Data and AI” and submitted to the Frontiers AI and Communication special collection, investigates how large language models (LLMs) and vision-language models can help generate high-quality alt-text: the brief, descriptive text that screen readers read aloud so that blind and low-vision (BLV) users can access an image's content.

We tested two complementary models: the InstructBLIP vision-language model, which interprets images directly, and OpenAI's GPT-3.5 Turbo, which generates text based on image metadata. Our goal was to create a semi-automated pipeline capable of producing accurate and engaging image descriptions for Chandra's public image archive in bulk. The workflow combined metadata-driven prompting, automated fact-checking, and rubric-based evaluation, followed in most cases by brief human moderation (typically two minutes per image).
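The metadata-driven half of that workflow can be sketched roughly as follows. This is a minimal illustration under our own assumptions, not the project's code: the metadata fields, the prompt wording, the number-matching "fact check," and the four rubric criteria are hypothetical stand-ins, and no model is called. The `draft` string simply plays the role of a model's output.

```python
import re
from dataclasses import dataclass


# Hypothetical metadata record. The field names are illustrative assumptions,
# not the schema of the Chandra public image archive.
@dataclass
class ImageMetadata:
    object_name: str
    distance_ly: int
    telescopes: list[str]
    color_key: dict[str, str]  # color name -> what that color represents


# Matches integers with optional thousands separators, e.g. "10,000".
NUMBER = re.compile(r"\d+(?:,\d{3})*")


def build_prompt(meta: ImageMetadata) -> str:
    """Turn metadata into a prompt for a text-only model such as GPT-3.5 Turbo."""
    colors = "; ".join(f"{c} = {m}" for c, m in meta.color_key.items())
    return (
        "Write concise alt-text for a blind or low-vision reader. "
        "Use only the facts listed below.\n"
        f"Object: {meta.object_name}\n"
        f"Distance: {meta.distance_ly:,} light-years\n"
        f"Telescopes: {', '.join(meta.telescopes)}\n"
        f"Color key: {colors}"
    )


def unsupported_numbers(alt_text: str, meta: ImageMetadata) -> list[str]:
    """Crude fact check: return numbers in the draft that the metadata lacks."""
    allowed = {meta.distance_ly}
    return [n for n in NUMBER.findall(alt_text)
            if int(n.replace(",", "")) not in allowed]


def rubric(alt_text: str, meta: ImageMetadata) -> dict[str, bool]:
    """Score a draft against a few simple, hypothetical rubric criteria."""
    text = alt_text.lower()
    return {
        "names_object": meta.object_name.lower() in text,
        "explains_colors": all(color.lower() in text for color in meta.color_key),
        "reasonable_length": 20 <= len(alt_text.split()) <= 150,
        "no_unsupported_numbers": not unsupported_numbers(alt_text, meta),
    }


def needs_rewrite(alt_text: str, meta: ImageMetadata) -> bool:
    """Route drafts that fail any criterion to a fuller human edit."""
    return not all(rubric(alt_text, meta).values())


# Example with made-up metadata and a stand-in for model output.
meta = ImageMetadata(
    object_name="Example Remnant",
    distance_ly=10_000,
    telescopes=["Chandra", "Hubble"],
    color_key={"purple": "X-rays", "yellow": "optical light"},
)
draft = (
    "This composite image shows the Example Remnant, about 10,000 light-years away. "
    "Purple represents X-rays from Chandra and yellow represents optical light. "
    "A bright ring of hot gas surrounds a faint central point, with wispy "
    "filaments extending outward toward the edges of the frame."
)
print(build_prompt(meta))
print(rubric(draft, meta))
print("needs rewrite:", needs_rewrite(draft, meta))
```

The number check is deliberately blunt: it flags any figure not present in the metadata (including years), which catches one common kind of hallucination but would need refinement before real use. Drafts that pass every criterion would go to a quick human check; the rest would get a fuller edit.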

The results were promising. The system produced mostly coherent, informative alt-texts that captured key astronomical details while significantly reducing generation time. By comparison, writing a complete, high-quality alt-text manually can take an hour or more. From 2021 through 2026 we partnered with BLV experts to create human-written alt-text for our publicly released Chandra images, but that approach was too time- and labor-intensive to address the twenty-year image backlog.

With the AI-assisted workflow, each description takes minutes rather than hours. Beyond efficiency, the study revealed how AI “hallucinates” details, how factual accuracy can be validated automatically, and how rubric-based evaluation can help identify strong descriptions. In this respect, the Chandra public image archive served as a microcosm that neatly mirrored broader challenges in ML projects.

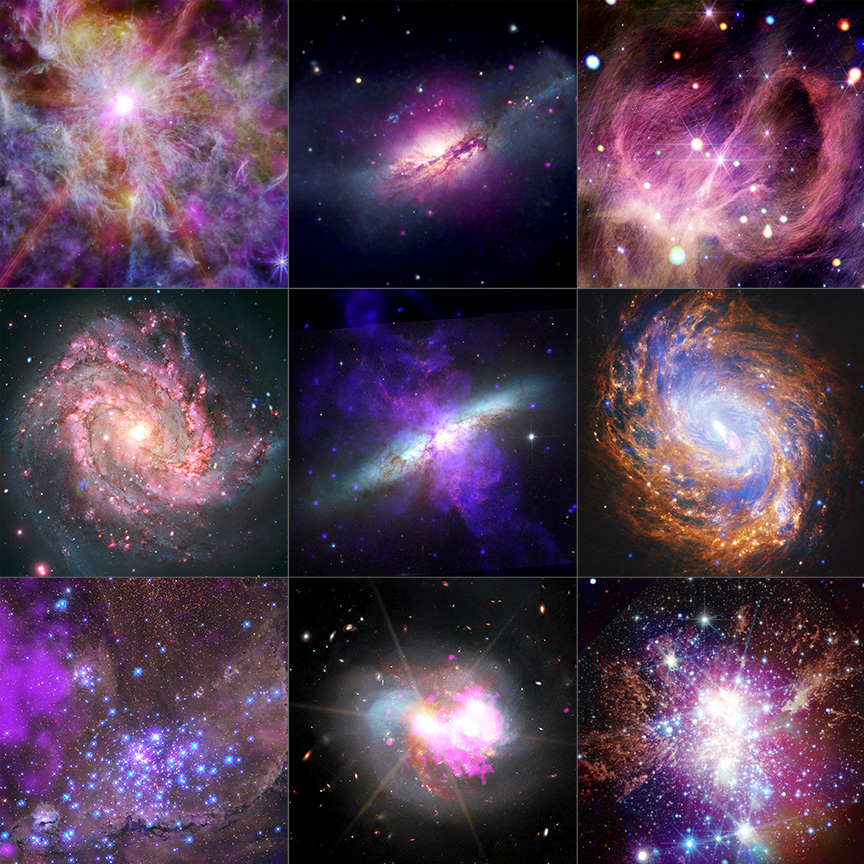

A handful of Chandra's approximately 1,000 publicly released images, available at chandra.si.edu. Thanks to our comprehensive alt-text project, all of them now have alt-text.

All Chandra images released on chandra.si.edu, whether from 1999, 2026, or any year in between, are now fully described with informative alt-text. As the volume of scientific imagery continues to grow, multi-tiered access cannot remain an afterthought; automating portions of the alt-text process allows teams to scale inclusion alongside data. This approach not only benefits BLV users but also improves discoverability, metadata consistency, and overall public engagement with NASA science across bandwidths, learning abilities, and levels of expertise. The study underscores that responsible AI use, guided by human expertise, can advance access to NASA data while maintaining Chandra's high scientific and communicative standards.

This project is part of a broader exploration at the Chandra X-ray Center into how emerging technologies—from AI-assisted sonification to 3D modeling—can enhance how we perceive and share high-energy astrophysics. Future work will refine these methods, expand to other NASA datasets, and explore multilingual and other audience-based applications. As AI continues to evolve, so too does our ability to make the invisible universe accessible to everyone.

Spatial Sonification in XR

The intersection of public engagement and emerging technologies, however, does not end with AI. Rather, AI is just the beginning.

As Chandra News readers will know, astronomical datasets are becoming increasingly complex, often spanning multiple dimensions and wavelengths that can be difficult to interpret through images alone. This is one of the reasons why Chandra has been at the forefront of exploring new modes of reaching audiences—ranging from data-based 3D modeling and printing to the virtual worlds of extended reality.

The Chandra communications and public engagement (CPE) team has also led the development of sonification—the translation of data into sound. While visualization remains central to both scientific analysis and public engagement, it can present barriers related to perception, accessibility, and cognitive load. Sonification offers a powerful complementary approach, but it has traditionally relied on non-spatial mappings that do not fully take advantage of how humans perceive sound, especially in immersive environments.

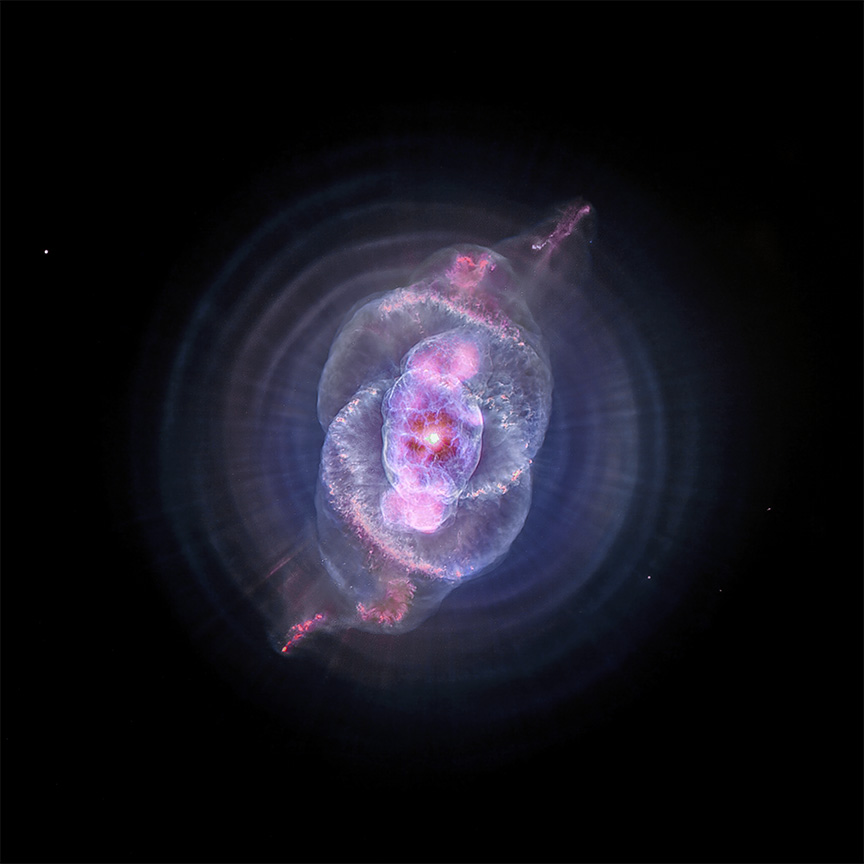

Bringing together our various skill sets and areas of expertise, the CPE team is developing an extended reality (XR) spatial sonification experience using NASA data of the Cat's Eye Nebula (NGC 6543) from the Chandra X-ray Observatory and the Hubble Space Telescope. In this experience, participants navigate the nebula not only visually, but also through sound. Three-dimensional scientific data describing the object's nested shells, filaments, and asymmetries are mapped to spatialized audio cues, allowing users to perceive depth, orientation, and structure through movement and interaction.

Cat's Eye Nebula in 2D from Chandra & Hubble (Credit: X-ray: NASA/CXC/SAO; Optical: NASA/ESA/STScI; Image Processing: NASA/CXC/SAO/J. Major, L. Frattare, K. Arcand)

Implemented within an XR environment, the experience changes what users hear not only as they move virtually through the object but also as they turn their heads, transforming the nebula into a navigable sound field. The Cat's Eye Nebula is an ideal case study for this approach because its 3D model is complex and layered. As a listener's position and motion change relative to those layers, so does the sound, conveying structure in ways that static images cannot. In short, by putting sound at the center of the experience, this work expands how people can experience and understand complex structures in space.
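The idea that turning one's head changes what is heard can be sketched with a few lines of geometry. The simplifications here are ours, not the team's: a top-down 2D view, plain equal-power stereo panning and inverse-distance attenuation standing in for the HRTF-based spatial audio that XR engines usually provide, and a hypothetical mapping from a normalized data value to loudness. The `Source` class and all coordinates are illustrative.

```python
import math
from dataclasses import dataclass


@dataclass
class Source:
    """Hypothetical sound source anchored to one structure in a 3D model."""
    name: str
    x: float          # distance to the right of the origin (top-down view)
    y: float          # distance in front of the origin
    intensity: float  # normalized data value (0..1) mapped to loudness


def relative_azimuth(src: Source, lx: float, ly: float, yaw: float) -> float:
    """Direction of the source relative to the listener's facing, in radians.

    yaw = 0 faces +y; positive yaw turns the head toward +x (to the right).
    The result is wrapped to [-pi, pi]; positive means the source is to the right.
    """
    world = math.atan2(src.x - lx, src.y - ly)  # bearing from +y toward +x
    rel = world - yaw
    return math.atan2(math.sin(rel), math.cos(rel))


def stereo_gains(src: Source, lx: float, ly: float, yaw: float,
                 ref_dist: float = 1.0) -> tuple[float, float]:
    """Equal-power stereo panning plus inverse-distance attenuation."""
    pan = math.sin(relative_azimuth(src, lx, ly, yaw))  # -1 hard left, +1 hard right
    theta = (pan + 1.0) * math.pi / 4.0                 # 0 .. pi/2
    dist = math.hypot(src.x - lx, src.y - ly)
    level = src.intensity * ref_dist / max(dist, ref_dist)
    return level * math.cos(theta), level * math.sin(theta)  # (left, right)


# A made-up shell 2 units in front of a listener standing at the origin.
shell = Source("inner shell", x=0.0, y=2.0, intensity=1.0)
for yaw_deg in (0, 90, -90):
    left, right = stereo_gains(shell, 0.0, 0.0, math.radians(yaw_deg))
    print(f"head yaw {yaw_deg:+4d} deg -> left {left:.3f}, right {right:.3f}")
```

Facing the shell, both ears get equal level; turning the head 90 degrees to the right moves it fully into the left channel, and turning left moves it into the right. Because stereo panning uses only the sine of the azimuth, a source directly behind sounds the same as one directly ahead; resolving that front-back ambiguity is one reason immersive systems rely on HRTFs.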

The Future

Together, these projects illustrate how emerging technologies can extend the reach and impact of Chandra (and NASA) science beyond traditional visual paradigms. From AI-assisted alt-text that improves access, discoverability, and reuse of astronomical data to immersive spatial sonification experiences that allow audiences to navigate complex 3D structures through sound, Chandra sits at the intersection of machine learning, multimodal data representation, and inclusive science communication.

By thoughtfully integrating human expertise with new technological tools, the Chandra X-ray Center continues to explore how high-energy astrophysics can be experienced across abilities, bandwidths, learning styles, and levels of expertise—ensuring that access to the Universe expands even as the data evolve.